Trying to prove that a generic drug works exactly like the brand-name version is usually straightforward. But when you're dealing with bioequivalence standards for "highly variable drugs" (HVDs), the math gets messy. In a standard study, the natural fluctuations in how a person absorbs a drug can drown out the actual difference between the test and reference products. If you stick to a traditional 2x2 crossover design for these drugs, you might find yourself needing over 100 volunteers just to have enough statistical power to demonstrate equivalence, which is a nightmare for both your budget and your timeline.

This is where replicate study designs come in. Instead of giving a subject each product once, you give at least one of them more than once. This allows researchers to separate the "noise" of the individual's biology from the actual performance of the drug. By estimating within-subject variability directly, these designs make it possible to prove equivalence with a fraction of the participants, often dropping the required sample size from 100+ down to just 24 or 48 people.

What Exactly are Replicate Study Designs?

At its core, a Replicate Study Design is a specialized bioequivalence methodology where subjects receive multiple doses of either the test formulation, reference formulation, or both across several treatment periods. While a standard study is a simple "A then B" or "B then A" sequence, replicate designs add extra layers of dosing to measure how much the drug's concentration varies within the same person over time.
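To make "how much the concentration varies within the same person" concrete, here is a minimal Python sketch of how two reference administrations per subject can be turned into a within-subject CV. It is a simplification under stated assumptions: the function name and the AUC values are made up for illustration, and period and sequence effects are ignored, which a real analysis would not do.

```python
import math
from statistics import variance


def within_subject_cv(ref_first, ref_second):
    """Estimate the reference product's within-subject CV (as a fraction).

    ref_first / ref_second: one PK value per subject (e.g. AUC) from the
    first and second administration of the reference product.
    Simplifying assumption: no period or sequence effects are modeled.
    """
    if len(ref_first) != len(ref_second) or len(ref_first) < 2:
        raise ValueError("need paired values for at least two subjects")
    # PK metrics are conventionally analyzed on the log scale.
    diffs = [math.log(a) - math.log(b) for a, b in zip(ref_first, ref_second)]
    # Each difference carries two independent within-subject errors,
    # so Var(diff) = 2 * s_wR^2.
    s2_wr = variance(diffs) / 2.0
    # Convert the log-scale variance back to a coefficient of variation.
    return math.sqrt(math.exp(s2_wr) - 1.0)


# Hypothetical AUC values, invented purely for illustration.
auc_r1 = [100.0, 80.0, 120.0, 95.0]
auc_r2 = [130.0, 60.0, 150.0, 70.0]
print(f"Estimated within-subject CV: {within_subject_cv(auc_r1, auc_r2):.1%}")
```

A standard 2x2 study cannot do this calculation at all, because each subject receives the reference only once.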

Regulatory bodies like the FDA and the EMA typically mandate these designs when the intra-subject coefficient of variation (ISCV) for the reference product exceeds 30%. When a drug is this unpredictable, the standard "80% to 125%" acceptance window is often too rigid. Replicate designs enable Reference-Scaled Average Bioequivalence (or RSABE), which essentially widens the goalposts based on the drug's own inherent variability, provided the test drug's variability is similar to the reference.
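As a rough illustration of "widening the goalposts", the sketch below computes scaled limits the way the EMA's expanded-limits approach does: the limits are exp(±0.760 · s_wR), scaling starts above a reference CV of 30%, and it is capped at a CV of 50%. The function and constant names are my own. The FDA's RSABE criterion is formulated differently and is not reproduced here.

```python
import math

K_EMA = 0.760      # scaling constant used in the EMA expanded-limits formula
CV_SCALING_STARTS = 0.30
CV_CAP = 0.50      # no further widening above this reference CV


def expanded_limits(cv_wr):
    """Return (lower, upper) acceptance limits as ratios for a reference CV.

    cv_wr is the within-subject CV of the reference as a fraction (0.40 = 40%).
    """
    if cv_wr <= CV_SCALING_STARTS:
        return 0.80, 1.25  # conventional limits apply
    cv = min(cv_wr, CV_CAP)
    # Within-subject standard deviation on the log scale.
    s_wr = math.sqrt(math.log(cv ** 2 + 1.0))
    upper = math.exp(K_EMA * s_wr)
    return 1.0 / upper, upper


for cv in (0.25, 0.35, 0.40, 0.50, 0.70):
    low, high = expanded_limits(cv)
    print(f"CV {cv:.0%}: {low:.2%} to {high:.2%}")
```

At the 50% cap this works out to limits of about 69.84% to 143.19%, which is the widest window available under this approach.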

Choosing the Right Design: Full vs. Partial

Not all replicate studies are built the same. Depending on the drug's profile, especially if it's a Narrow Therapeutic Index (NTI) drug, you'll have to choose between full and partial designs. If you pick the wrong one, you risk a regulatory rejection or a study that lacks the power to prove anything.

Full Replicate Designs are the gold standard for high-stakes drugs. In a four-period sequence (like TRRT or RTRT), subjects get both the test and reference products twice. This gives you a clear picture of the variability for both versions. The FDA specifically requires this for drugs like Warfarin Sodium because the margin for error is so slim.

Partial Replicate Designs are a bit leaner. These usually involve three-period sequences (like TRR or RTR). Here, you're only replicating the reference product. While the FDA accepts this for RSABE analysis, you lose the ability to precisely measure the test product's own variability, which can be a dealbreaker for certain NTI applications.

| Design Type | Common Sequences | What it Measures | Best Use Case | Typical Sample Size |
|---|---|---|---|---|
| Standard 2x2 | TR, RT | Average difference | Low variability (ISCV < 30%) | High (for HVDs) |
| Partial Replicate | TRR, RTR, RRT | Reference variability | Standard HVDs | Moderate (36-48) |
| Full Replicate | TRRT, RTRT, TRT | Both Test & Ref variability | NTI drugs / High variability | Low to Moderate (24-72) |
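The distinction in the table comes down to which product gets repeated. The small Python helper below is an illustrative sketch, not part of any regulatory tool: it labels a design from its sequence strings. One assumption to note is that a product counts as replicated if it appears at least twice in any sequence of the design, so TRT/RTR is treated as a three-period full replicate.

```python
def classify_design(sequences):
    """Label a crossover design from its sequences, e.g. ["TRR", "RTR", "RRT"].

    "T" is the test product, "R" the reference product.
    """
    sequences = [s.upper() for s in sequences]
    if not sequences or any(not s or set(s) - {"T", "R"} for s in sequences):
        raise ValueError("sequences must be non-empty strings of 'T' and 'R'")
    # A product is replicated if some sequence administers it twice or more.
    test_replicated = any(s.count("T") >= 2 for s in sequences)
    ref_replicated = any(s.count("R") >= 2 for s in sequences)
    if test_replicated and ref_replicated:
        return "full replicate"
    if ref_replicated:
        return "partial replicate"
    if test_replicated:
        return "test-only replicate (unusual)"
    return "standard crossover"


for design in (["TR", "RT"], ["TRR", "RTR", "RRT"], ["TRT", "RTR"], ["TRTR", "RTRT"]):
    print("/".join(design), "->", classify_design(design))
```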

The Statistical Power Advantage

Why go through the trouble of extra dosing periods? The answer is simple: power. In a standard 2x2 design, if a drug has an ISCV of 50% and a formulation difference of 10%, you'd need roughly 108 subjects to have adequate power to demonstrate equivalence. In a replicate design with reference scaling, you could reach the same power with just 28 subjects. That's a massive reduction in cost and recruitment time.

Industry data shows that for drugs with variability between 40% and 60%, replicate designs maintain 80-90% power with only 24-48 subjects. Without this approach, bioequivalence assessment for many generics would be practically impossible because the number of volunteers required would be prohibitively large.
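To see why the 2x2 design runs out of road as variability climbs, here is a back-of-the-envelope Python sketch using a textbook normal approximation for the two one-sided tests procedure with fixed 80-125% limits. Treat it as an assumption-laden illustration: the function name and defaults are mine, the approximation slightly underestimates exact sample sizes, and its output depends heavily on the assumed ratio and target power, so it will not necessarily match the figures quoted above.

```python
import math
from statistics import NormalDist


def approx_n_2x2(cv_w, gmr=0.95, power=0.80, alpha=0.05, limit=1.25):
    """Approximate total subjects for a 2x2 crossover with fixed limits.

    cv_w: within-subject CV as a fraction; gmr: assumed test/reference ratio.
    Normal approximation only; exact methods give slightly larger numbers.
    """
    if not (1.0 / limit < gmr < limit):
        raise ValueError("assumed ratio must lie inside the acceptance limits")
    sigma2_w = math.log(cv_w ** 2 + 1.0)  # within-subject variance, log scale
    z = NormalDist().inv_cdf
    beta = 1.0 - power
    z_alpha = z(1.0 - alpha)
    # When the assumed ratio is exactly 1, both one-sided tests share the risk.
    z_beta = z(1.0 - beta / 2.0) if gmr == 1.0 else z(1.0 - beta)
    # Distance on the log scale from the assumed ratio to the nearer limit.
    margin = math.log(limit) - abs(math.log(gmr))
    n = math.ceil(2.0 * sigma2_w * (z_alpha + z_beta) ** 2 / margin ** 2)
    return n + (n % 2)  # round up to an even total for balanced sequences


for cv in (0.20, 0.30, 0.40, 0.50, 0.60):
    print(f"CV {cv:.0%}: about {approx_n_2x2(cv)} subjects")
```

The required sample size grows roughly in proportion to the within-subject variance, which is why fixed limits become impractical for highly variable drugs and why scaling the limits makes such a difference.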

Practical Pitfalls and Implementation

It sounds great on paper, but executing a replicate study is significantly harder than a standard crossover. You're asking volunteers to come back for more visits, and the data analysis is far more complex. If you're planning a study, keep these three traps in mind:

- The Dropout Domino Effect: More periods mean more chances for a subject to quit. Industry data suggests a 15-25% dropout rate for multi-period studies. If you need 24 evaluable subjects, you should over-recruit by at least 20-30% to avoid having to restart your recruitment phase.

- Washout Window: Because you're dosing more frequently, your washout periods must be airtight. If the drug from Period 1 is still in the system during Period 2, your variability data is corrupted, and the regulator will likely toss the whole study.

- Software Hurdles: You can't just use a basic spreadsheet. You need tools capable of handling mixed-effects models. The replicateBE package in R has become an industry standard, but the learning curve is steep, often requiring 80 to 120 hours of specialized training for analysts to master.
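The dropout arithmetic from the first trap is easy to get slightly wrong: to end up with N evaluable subjects you divide by the expected completion rate rather than simply adding the dropout percentage on top. A small Python sketch, with a hypothetical function name and whole-number percentages to keep the arithmetic exact:

```python
def subjects_to_enroll(evaluable_needed, dropout_percent):
    """Subjects to enroll so that the expected completers cover the target.

    dropout_percent is a whole number, e.g. 20 for an expected 20% dropout.
    """
    if evaluable_needed < 1:
        raise ValueError("need at least one evaluable subject")
    if not 0 <= dropout_percent < 100:
        raise ValueError("dropout_percent must be in the range 0-99")
    completers_per_100 = 100 - dropout_percent
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-evaluable_needed * 100 // completers_per_100)


for pct in (15, 20, 25):
    print(f"{pct}% dropout: enroll {subjects_to_enroll(24, pct)} to keep 24")
```

For 24 evaluable subjects, a 20% expected dropout means enrolling 30, and 25% means enrolling 32.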

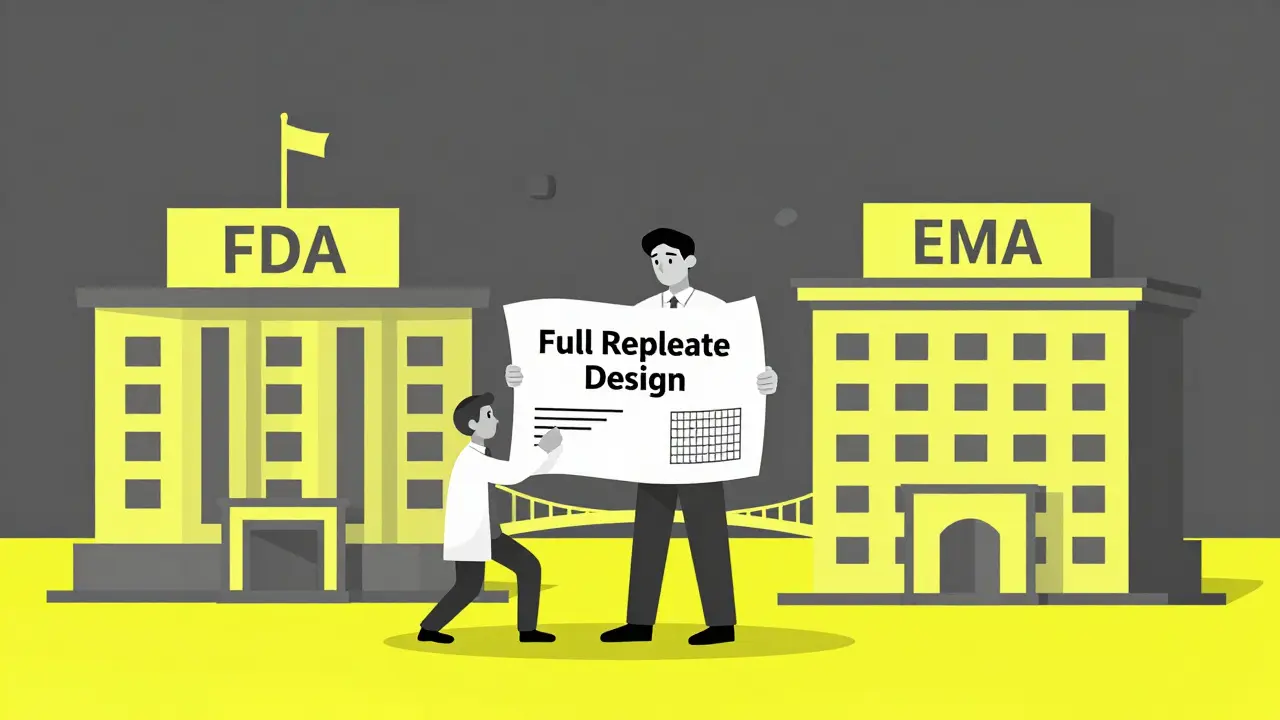

Regulatory Trends: FDA vs. EMA

While both the FDA and EMA support reference-scaling, they aren't always on the same page. The EMA tends to be more flexible with three-period designs (TRT/RTR), while recent FDA draft guidances suggest a push toward standardizing four-period full replicate designs for any drug with an ISCV over 35%.

This divergence creates a headache for global launches. Some companies have seen rejection rates 23% higher when submitting FDA-preferred designs to the EMA. The general rule of thumb is to lean toward the more rigorous full replicate design if you intend to file in both jurisdictions; it's safer and more likely to satisfy both agencies.

When is a replicate design mandatory instead of a 2x2 crossover?

Generally, when the intra-subject coefficient of variation (ISCV) of the reference product is greater than 30%. In these cases, standard designs require an unfeasibly large number of subjects to achieve adequate statistical power, making replicate designs the only practical option.

What is the main difference between partial and full replicate designs?

A partial replicate design only replicates the reference product, allowing you to estimate reference variability for RSABE. A full replicate design replicates both the test and reference products, which is necessary for Narrow Therapeutic Index (NTI) drugs where both variabilities must be precisely known.

How does RSABE change the bioequivalence limits?

Instead of the fixed 80-125% window, RSABE scales the acceptance limits based on the measured variability of the reference product. If the reference product is highly variable, the limits are widened, provided the test product's variability is similar.

Why are NTI drugs treated differently in these studies?

Narrow Therapeutic Index drugs have a very small window between a therapeutic dose and a toxic dose. Because of this, regulators require full replicate designs to ensure that the test drug doesn't introduce any additional variability that could lead to patient instability.

What software is recommended for analyzing replicate BE data?

The most common tools are Phoenix WinNonlin and the replicateBE package for R. These are preferred because they can handle the complex mixed-effects models required to separate within-subject variability from the average treatment difference.

Next Steps for Study Planning

If you're moving from the planning phase to execution, start by auditing your expected variability. For ISCV under 30%, stick with the 2x2 crossover. If you're in the 30-50% range, a three-period full replicate design (TRT/RTR) usually offers the best balance of power and cost. For anything over 50%, or for NTI drugs, go straight to a four-period full replicate design to avoid regulatory pushback.
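That rule of thumb can be written down as a few lines of Python. This is only a sketch of the heuristic described here, not regulatory guidance: the function name is made up, and the handling of the exact 30% and 50% boundaries is an assumption, since the thresholds are stated loosely.

```python
def recommend_design(iscv_percent, is_nti=False):
    """Map expected reference variability to a starting-point study design.

    iscv_percent: expected intra-subject CV of the reference, in percent.
    Boundary handling (exactly 30 or 50) is an assumption of this sketch.
    """
    if iscv_percent < 0:
        raise ValueError("ISCV cannot be negative")
    if is_nti or iscv_percent > 50:
        return "four-period full replicate"
    if iscv_percent >= 30:
        return "three-period full replicate"
    return "2x2 crossover"


for iscv, nti in ((22, False), (38, False), (62, False), (18, True)):
    print(f"ISCV {iscv}%, NTI={nti}: {recommend_design(iscv, nti)}")
```

Whatever the helper suggests, the product-specific guidance for your drug takes precedence over any generic threshold.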

Finally, don't underestimate the recruitment phase. Given the higher subject burden and longer study duration, your screening process needs to be rigorous. Look for volunteers with a history of completing multi-visit trials to minimize the costly dropout rates that often plague these advanced BE assessments.